-

The other day, Hardware Unboxed showed how NV GPUs eat up more CPU cycles for a given level of FPS/perf, and it was assumed that it only occurs in DX12 & Vulkan, so there's some "bug" with the drivers.

But this happens in CPU intensive DX11 titles as well.

Example.

Probably many other titles too. The reason it was less obvious during the DX11 era is that not many games were very CPU intensive, so there was always extra headroom for NV GPUs to tap into without causing perf loss or stutters. Reviewers always testing GPUs with the fastest CPU they have also hides this problem.
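To make the headroom argument concrete, here is a toy model (a sketch under my own assumptions, not measured data): CPU and GPU work overlap, so the slower of the two sets the frame rate, and driver overhead is simply added to the CPU's per-frame cost. The `fps` helper and every millisecond figure are hypothetical.

```python
def fps(game_cpu_ms: float, driver_cpu_ms: float, gpu_ms: float) -> float:
    """Frame rate when CPU and GPU work overlap and the slower side sets the pace."""
    cpu_ms = game_cpu_ms + driver_cpu_ms  # driver overhead is paid on the CPU
    return 1000.0 / max(cpu_ms, gpu_ms)

# Hypothetical per-frame costs in milliseconds (assumptions, not benchmarks).
GPU_MS = 8.0
LOW_OVERHEAD_MS = 1.0
HIGH_OVERHEAD_MS = 3.0

# Fast CPU: the game logic only needs 4 ms, so either driver fits under the GPU time.
fast_cpu_low = fps(4.0, LOW_OVERHEAD_MS, GPU_MS)
fast_cpu_high = fps(4.0, HIGH_OVERHEAD_MS, GPU_MS)

# Slow CPU: the game logic needs 7 ms, so the extra driver cost pushes the CPU past the GPU.
slow_cpu_low = fps(7.0, LOW_OVERHEAD_MS, GPU_MS)
slow_cpu_high = fps(7.0, HIGH_OVERHEAD_MS, GPU_MS)

print(fast_cpu_low, fast_cpu_high, slow_cpu_low, slow_cpu_high)
```

With these made-up numbers the fast CPU shows no difference between the two drivers, while the slow CPU loses frame rate only with the higher-overhead driver. That is the pattern a review done only on a top-end CPU would never see.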

So if you're still on older CPUs because you're like me and a tight ass that hates to waste hardware by frequent upgrades, think twice when you decide the next GPU.

ps. There are also some DX11 games that are NV-sponsored ports that genuinely seem to be CPU bottlenecked on AMD GPUs. This is a separate issue, due to AMD's primary thread scheduling requirements and these games' tendency to slam more logic or PhysX onto the render thread, choking AMD GPUs.

-

When using D3D11 the Nvidia driver will fully utilize a second CPU core in addition to the CPU core calling D3D11, which probably contributes to their performance advantage in single threaded D3D11 games. I have not seen this behavior in D3D12 or Vulkan.
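A rough sketch of that pattern, assuming a plain producer/consumer handoff: the thread calling the API only enqueues commands, and a second thread does the expensive translation. This only illustrates offloading work to another core; it is not Nvidia's actual driver code, and `submit_draw`, `driver_worker` and the command names are made up.

```python
import queue
import threading

commands = queue.Queue()   # handoff between the API thread and the worker thread
processed = []             # stands in for work submitted to the GPU

def driver_worker():
    """Runs on a second thread and does the (hypothetical) costly translation."""
    while True:
        cmd = commands.get()
        if cmd is None:        # sentinel: no more work
            break
        processed.append(f"translated:{cmd}")

def submit_draw(cmd):
    """Called on the game's render thread; returns as soon as the command is queued."""
    commands.put(cmd)

worker = threading.Thread(target=driver_worker)
worker.start()
for i in range(3):
    submit_draw(f"draw_{i}")
commands.put(None)             # tell the worker to stop
worker.join()
print(processed)
```

The render thread gets cheaper per call, but the total CPU work does not shrink. It moves to another core and adds queueing on top, which only helps while a spare core is available.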

It is kind of surprising that their D3D12 driver has significantly higher overhead than AMD's D3D12 driver.

ID: gqx0r3lID: gqyanxvI haven't dug into the details, so I am just speculating, but it is possible Nvidia made some hardware design decisions that require more CPU cycles to set things up for the GPU. They have done this in the past. I still recall the pain of programming the PS3 GPU (G70/G71 based), where the pixel shaders didn't have a constant store, so you had to patch pixel shader constants directly into the pixel shader microcode (often in multiple places), which resulted in terrible performance if you did it on the CPU. The solution was to use the Cell processors to do the patching with some tricky synchronization between the CPU, Cell processor, and GPU.

ID: gqxib65Mantle led the way to Vulkan, not DX12.

-

Please add this video to your post, as HUB linked it in their pinned comments. It explains why the Nvidia driver works the way it does:

TL;DW: in order to get an advantage in early DX11 games, Nvidia made their scheduler software based, meaning they could 'convert' mostly single threaded games to utilize more cores for draw calls. AMD at the time had a hardware scheduler that was much more optimized for async compute, but games didn't use it much.

Fast forward to today and games are much more multi core dependent, and now the software scheduler for Nvidia creates driver overhead because there are some losses in doing the conversion instead of just taking the draw calls directly as AMD does.

This is a gross oversimplification and I'm probably wrong on something so make sure to watch the video.

-

Unless something has changed I'm pretty sure Nvidia does better in DX11 than AMD when it comes to driver overhead while the opposite is true for low level APIs.

ID: gqwv03iThey used to do better in many DX11 titles despite the overhead. The overhead has been there since Kepler. They removed the complex scheduler after Nvidia Thermi series.

ID: gqy5arwNvidia released their DX driver for optimising draw calls, a bit after AMD announced Mantle. The Nvidia driver is using more CPU in DX11/10/9 to keep the GPU busier.

That's a plus. It's a problem when games become better and Nvidia doesn't adapt.

I wonder what changing "Threaded Optimization" would do to modern titles like the ones tested by HWUB.

-

this overhead behavior (at least in DX 11) has been known for quite a while

video from ~4 years ago:

what wasn't known was that Nvidia has sizable driver overhead in DX12 across multiple GPU generations since Nvidia has had hardware scheduling available in their GPUs since Pascal

ID: gqybe6qAll GPUs have a hardware scheduler. This is a common misunderstanding.

It's just that NV since Kepler, has moved a few functions of prior HWS to their driver to simplify the hardware design, saving them some transistors & less power use, at the expense of using some CPU cycles.

-

This also affected DX9 games. I used to bring this up in the past.

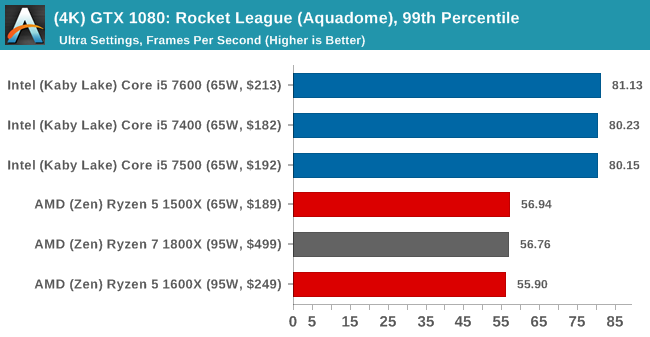

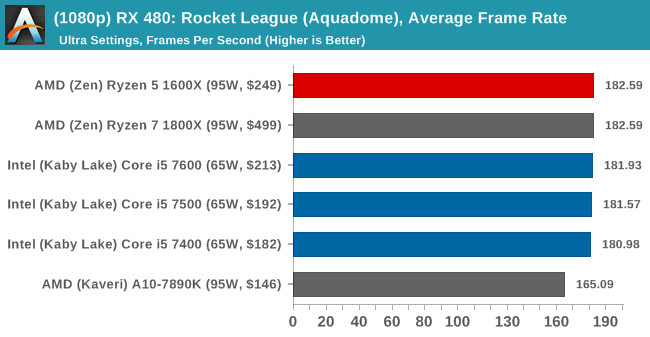

Even at 4k where CPU bottlenecks should be lower the GTX 1080 was getting 81FPS with the Intel CPU and 56fps with Ryzen 1000 series

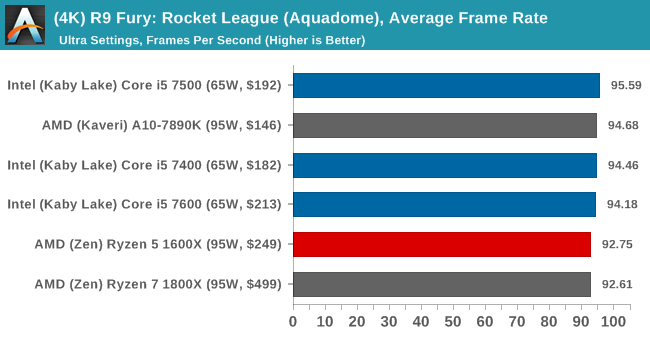

On the Fury AMD had 96fps on the Intel CPU and 95FPS on the Kaveri A8 7890k as the CPU

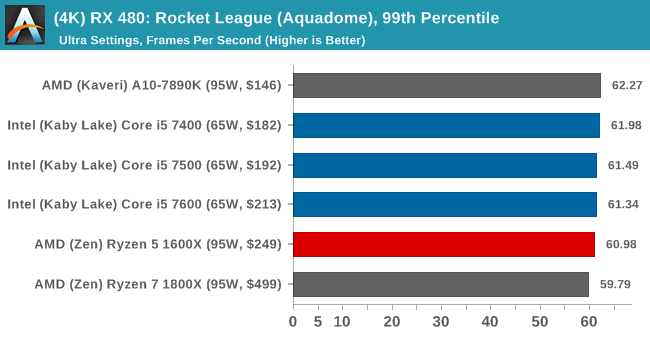

Even the 480 was beating the 1080 even at 4k in this title on non skylake CPU's due to this overhead

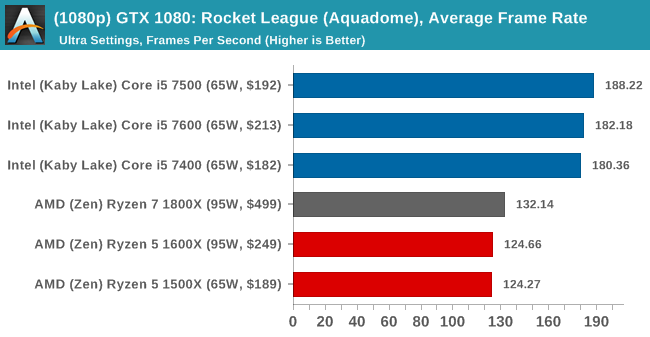

At 1080p you went from 180 on Intel CPU's to 130 on AMD CPU's on GTX 1080

The Radeon 480 kept 180 on all CPU's until you go to Kaveri series where it dropped to 165
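For scale, the FPS figures quoted above work out to the following relative drops. This is just arithmetic on the numbers in this comment; the `relative_drop` helper is mine.

```python
def relative_drop(baseline_fps: float, observed_fps: float) -> float:
    """Percentage of frame rate lost relative to the baseline."""
    return (baseline_fps - observed_fps) / baseline_fps * 100.0

# FPS pairs taken from the figures quoted in this comment.
gtx1080_4k = relative_drop(81, 56)       # Intel CPU vs Ryzen 1000 series
fury_4k = relative_drop(96, 95)          # Intel CPU vs Kaveri
gtx1080_1080p = relative_drop(180, 130)  # Intel CPUs vs AMD CPUs
rx480_1080p = relative_drop(180, 165)    # all other CPUs vs Kaveri

for name, drop in [
    ("GTX 1080 @ 4k", gtx1080_4k),
    ("Fury @ 4k", fury_4k),
    ("GTX 1080 @ 1080p", gtx1080_1080p),
    ("RX 480 @ 1080p", rx480_1080p),
]:
    print(f"{name}: {drop:.1f}% slower")
```

So the GTX 1080 loses roughly 28 to 31 percent when moved to the slower CPUs, while the Radeons lose about 1 to 8 percent.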

-

I guess this is HUB's new focus. It's funny because AMD users complained about driver overhead in dx11 for years and most people either pretended it didn't exist (some bs about GCN cache), or said it didn't matter (1080p on a fury x isn't important).

After one nvidia video, it's gospel, and now people shouldn't buy nvidia cards. Where was this level of reporting then?

ID: gqwhixvFrom the video it seems they accidentally stumbled upon the problem, it's not something they set out to find. Reviewers typically pair all cards with high end CPUs in order to avoid bottlenecks on that side. It's the testing methodology not some conspiracy theory.

ID: gqxedm9I think this is showing itself after cpu got a massive jump in computing power cheaply. Now 6 cores is considered a sweet spot. Games are evolving fast and finally nvidia software magic is feeling the pinch of trying to actually optimize it with the ever changing cpu landscape.

Doing so left the trail of forgetting about those people with slower cpus..

ID: gqwh76qcorrect me if I'm wrong, but there wasn't a driver overhead for amd gpus, some game engines ran rendering tasks in a single thread (in dx11/opengl), which would often stall and kill the framerates. nvidia fixed this in their drivers, which is an achievement in itself, but amd didn't want to or couldn't, ultimately this was a limitation of amd's hardware. that was the whole point of mantle/vulkan/dx12.

no one is saying that people shouldn't buy nvidia cards, that's ridiculous. but if you buy an expensive card (& pair it with an old cpu), you will get lower performance in certain situations.

ID: gqwn80vThe HUB tweet references a 1660ti in a dx11 game. Not an expensive gpu (normally). Further in the tweet chain they claim nvidia has an overhead issue in dx11 while referencing BF5. Not one mention of AMD's dx11 problem.

I already see where this is going. It's more of a critique of HUB than anything.

ID: gqwka6opretended it didn't exist (some bs about GCN cache), or said it didn't matter (1080p on a fury x isn't important).

No one pretended it didn't exist, this sub was full of threads discussing driver overhead back in the GCN days pre-DX12. Not every situation where AMD GPUs underperformed was due to driver overhead though.

I think a lot of performance disparity was misattributed to AMD's driver overhead when in fact it was more to do with the GPU architecture being quite limited in certain scenarios and not being particularly scalable, and also a general lack of multithreading in games.

Features like async compute were present in GCN since GCN 1.0 but were not used in games until DX12 came along. That was performance left on the table for years.

ID: gqxrbncand also a general lack of multithreading in games.

Only on AMD, nVidia had full DX11MT.

ID: gqwoa9sCertain influencers swore it didn't exist and it was never covered by HUB or any popular outlet. My criticism is that if HUB is going to compare Radeon to Rtx, and start jumping into dx11 games, they shouldn't make sweeping statements based on BF5 and a 390/1660ti.

ID: gqwysv7When people bitched I corrected them with benchmarks even DX9 games showed this behavior

At 1080p you went from 180 on Intel CPU's to 130 on AMD CPU's on GTX 1080

The lower end Radeon 480 kept 180 on all CPU's until you go to Kaveri series where it dropped to 165

If you played Rocketleague & League of Legends only at 1080p the 480 was a faster GPU in those titles than the GTX 1080 even at 4k or above which is laughable.

ID: gqy4xgsDamn. Sucks that most of us have never heard about this before now

ID: gqx1n0aThis is not a new focus, it's feedback after the video from people who watched it and want to bring HUB's attention to things that are related to that video. And HUB is doing its due diligence with that viewer feedback, as it should.

ID: gqxe0viI don't know what you're talking about. Sorry.

ID: gqx4cdvWho is saying you shouldn't buy Nvidia because of this? There has been plenty of crap learned about both sides if you pay enough attention. Why does it matter more when Nvidia does it? Because they have 80% of the market share, so it affects many more people. It would not appear many people need an excuse to not get AMD.

-

Has there been any tests done after disabling the "threaded optimization" option in the Nvidia control panel?

-

So is this only present in the turing and ampere GPUs or is Pascal also subject to it?

-

This is literally years old information...

-

How the Nvidia driver works hurts some games but benefits others disproportionately more, and it's not a bug. Pick your poison: quality of life wise, the pros still outweigh the cons on Nvidia compared to AMD IMO.

ID: gqy5tajIt's wonderful you're being down voted, and that also nobody mentions the Threaded Optimisation setting in the Nvidia drivers.

ID: gqwfn5pHardware Unboxed were fairly clear in their video and linked to this one

'AMD vs NV Drivers: A Brief History and Understanding Scheduling & CPU Overhead'

no bugs, seems clear to me. the AMD hardware setup is better, dedicated silicon to do work is always faster than general compute (why would we use GPUs if they're not better than a CPU).

I'm not saying their GPUs are faster, just that it's a better setup.

I've used both brands & both work fine, choose what's best for your use case.

if you play game 'x' and it's faster on brand 'x' and cost is the same then you know what to do.

The big plus for the AMD setup is if, like me, you tend to upgrade the GPU a few times in each computer, then as your CPU ages the AMD GPU will really give you an edge.

PS it's not just an 'AMD' setup for the GPU, SONY must have had a big hand in it & my god with the puny CPUs in the PS4 this must have helped so much!

just think if there was the driver overhead on the PS4 CPU as well as the game...

no way the system would have lasted as long as Sony wanted, they really must have looked years down the road and planned for it in silicon.

ID: gqwqxp1This is wrong though. Out of gcn and fermi, fermi ACTUALLY had a real hardware scheduler. GCN did not. Fermi was a fully capable setup in comparison. All modern GPU's have hardware scheduling they cannot work without it. This is asinine and he does not fully understand what he's reviewing. It's gone for a reason though. It used too much power.

This mentions the hardware "warp" scheduling that still exists and the same "wave" scheduling on navi. Both of them are simply not as capable as fermi. It's got the same name even gigathread engine. There is a warp/wave scheduler per sm/cu...

ID: gqwzgkzWhile I do agree with this main point, it's not really fair to say it's a better setup.

A complex hardware scheduler takes up huge die space. If Nvidia did this complex hardware scheduler they would reduce overhead, however the GPUs would either

a) have fewer cores

b) have bigger die sizes

ID: gqw6ytrYeah. Their ability to rewrite shaders and game's code on the fly is a huge asset.

And that overhead isn't THAT large. I mean, older 4/4 or 4/8 cpus are not able to deliver 60 fps in modern games (like bf1 or mh world) regardless of overhead. Neither do i3/r3.

ID: gqw7qpt. I mean, older 4/4 or 4/8 cpus are not able to deliver 60 fps in modern games (like bf1 or mh world) regardless of overhead. Neither do i3/r3.

Look at the vid linked. 4c/8t runs bf1 or bfv MP fine on Radeons, with >100 fps & smooth gameplay.

Heck, it wasn't long ago that the 7700K was beast mode for gaming. It still is on Radeons.

-

Told ya

-

think twice when you decide the next GPU.

Comes up to what you want.

Rasterization perf

or Features.

-

Just more and more reason to permanently avoid novideo. Now we have factual objective proof that they purposefully nuke your performance while charging you more. Just more amoral unethical bullshit from the GPU market's leading scumbag.

ID: gqyk9jkAmd shill strikes again

-

Are we going to use random YouTube videos now in constructing an argument? From that video it does not seem like the comparison was as like for like as possible so it is impossible to come to conclusions about driver overhead being higher on NVIDIA over AMD.

The fact is that in games where the main thread that issues draw calls is left to one core without other threads interfering then AMD and NVIDIA have the same driver overhead. It is in games where the draw call thread has to compete with other threads on the same core that NVIDIA pulls ahead because they implement command lists in their driver while AMD doesn't.

EDIT: Why the downvotes? See for yourself -

Sekiro

RX 570 + i3 9100F It can't hold 60 FPS.

GTX 1060 + i3 9100F Holds a steady 60 FPS.

GTA V

RX 570 + i3 9100F 84 FPS.

GTX 1060 + i3 9100F 101 FPS.

ID: gqx5dtnI'm pretty sure he mentioned the video to include with his conclusion.

Same setup just GPU change.

R9 390, was 100% utilized, and cpu hovered 60-70% while 1660 was 70% utilized while cpu was 70-80%

There definitely is some fuckery going on.

ID: gqx787nHow do you trust random YouTube videos to give accurate results unless you can be sure of their testing methodology?

ID: gqy292bAre you joking? Ofc the 570 will be slower!

RX 570 is not the 1060 competitor, it is a tier lower.

The RX 580 is the 1060 competitor.

If you're gonna do a GPU perf comparison, at least get one that's of the same tier or class.

And no, this isn't just random. HUB did some DX11 testing, results on his patreon. He will do a full video soon covering many more games as his viewers asked for more testing.

ID: gqxhk60I like how OP blames nvidia sponsored games for AMD driver shortcomings in the closing paragraph. That's all you need to know about this recommendation.

Source: https://www.reddit.com/r/Amd/comments/m4sove/fyi_the_driver_overhead_of_amd_nv_gpus_also/

AMD worked on Mantle, which led the way to DX12, so I guess you could say they had some experience.